The clinics that could benefit from AI the most might be the least equipped to govern it safely

Federally qualified health centers, or FQHCs, are community-based clinics that care for underserved patients, often regardless of ability to pay. Most people outside healthcare policy have never heard of them.

But if you care about whether AI in healthcare will actually help patients rather than just sound nice, FQHCs are one of the most important places to pay attention to.

Why? Because they sit at the center of a hard paradox: The healthcare organizations that may benefit the most from AI are often the least equipped to use it safely.

That is not because they are careless or resistant to innovation. It’s because they operate under some of the hardest conditions in American healthcare:

• Chronic staffing pressure

• Heavy documentation burden

• Fragmented care histories

• Limited bandwidth for technical projects

On top of that, they serve patients whose lives are often shaped by transportation barriers, insurance churn, housing instability, language mismatch, and limited access to specialty care.

In theory, AI sounds tailor-made for that environment. Tools for documentation, inbox triage, no-show prediction, referral prioritization, and risk stratification all promise to save time and focus scarce resources.

In practice, those same settings expose a problem that the broader AI conversation often skips over: safe deployment depends on both good data and strong governance, and safety-net clinics often have the least access to both.

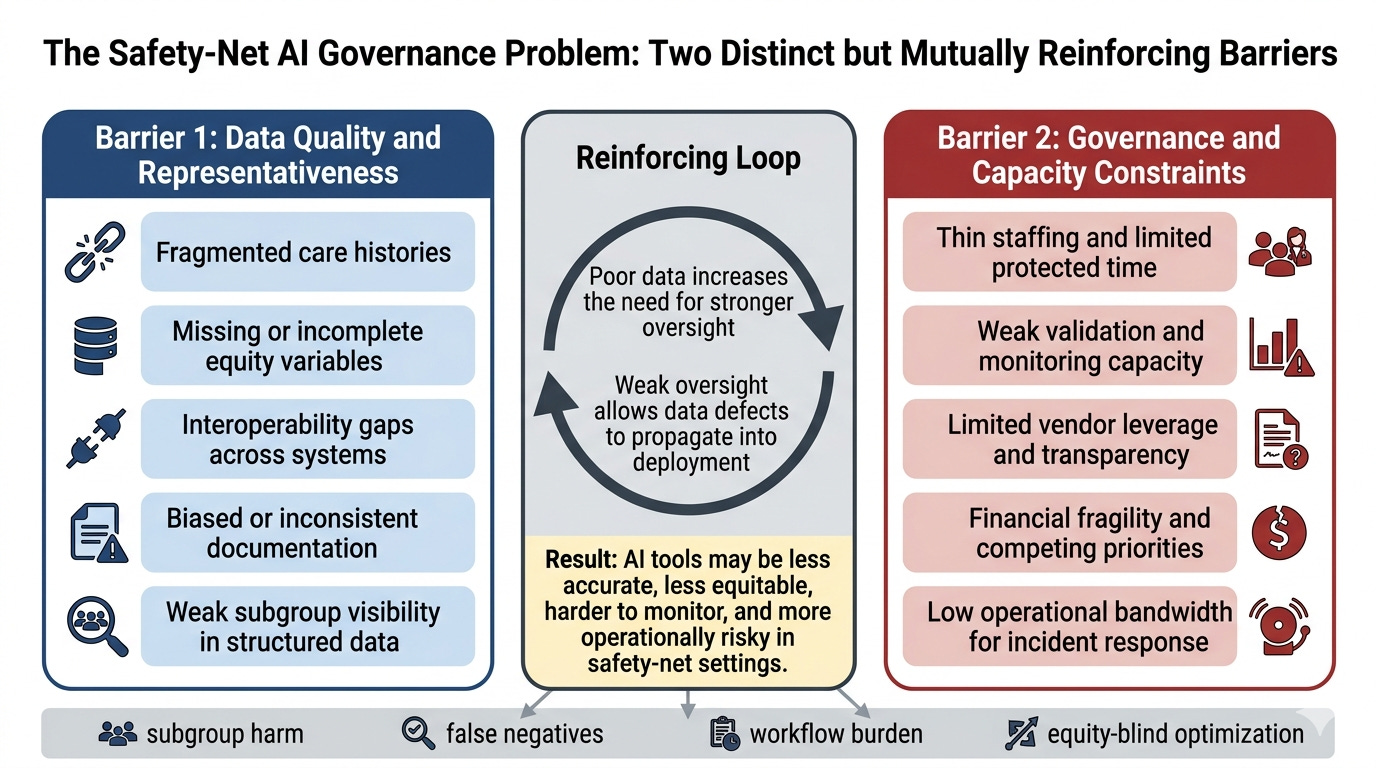

Barrier #1: The data problem

When people hear “bad data,” they often imagine a technical issue like missing fields, messy spreadsheets, or inconsistent formatting.

But in safety-net settings, bad data is a social and operational issue before it is a technical one.

A patient may receive care in multiple disconnected places. Their insurance may lapse and restart. Important information may live in outside records, claims systems, or narrative notes instead of structured fields. A portal-based digital trail may be thin or absent. Social risk factors that strongly shape care may be inconsistently documented or not documented at all.

That means the issue is not only having less data than large academic medical centers. It’s having data that may be fragmented, incomplete, differently generated, and weakly representative of the actual population the clinic serves.

This matters because AI systems are only as trustworthy as the data and workflows they rely on.

A no-show model trained in a well-resourced setting may misread missed appointments at a clinic whose patients face transportation barriers, unstable work schedules, or intermittent phone access.

A referral-prioritization tool may look neutral on paper while quietly reflecting gaps in who gets referred, documented, or followed up in the first place.

An ambient documentation tool may perform well in polished product demos but struggle in multilingual, high-interruption, high-complexity visits.
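To make the no-show example concrete, here is a minimal sketch of the kind of local check a clinic (or a shared support team) might run: does the model wrongly flag patients who actually showed up more often in one group than in another? Everything here is an assumption for illustration, including the record format, the group labels, the function names, and the 10-point gap threshold. It is not a validated fairness audit.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Share of patients who attended but were flagged as likely no-shows, per group.

    `records` is an iterable of (group, predicted_no_show, actually_no_show)
    tuples. This format is hypothetical, chosen only for illustration.
    """
    flagged = defaultdict(int)   # attended, but the model predicted a no-show
    attended = defaultdict(int)  # everyone who actually attended
    for group, predicted_no_show, actually_no_show in records:
        if not actually_no_show:
            attended[group] += 1
            if predicted_no_show:
                flagged[group] += 1
    # Groups with no attended visits are left out rather than divided by zero.
    return {group: flagged[group] / attended[group] for group in attended}


def groups_exceeding_gap(rates, max_gap=0.10):
    """Groups whose rate is more than `max_gap` above the lowest group's rate.

    The 0.10 default is an arbitrary placeholder, not a recommended threshold.
    """
    if not rates:
        return []
    baseline = min(rates.values())
    return sorted(g for g, rate in rates.items() if rate - baseline > max_gap)


# Made-up example data: (group, predicted_no_show, actually_no_show)
sample_records = [
    ("phone_on_file", True, False),
    ("phone_on_file", False, False),
    ("phone_on_file", False, False),
    ("phone_on_file", False, False),
    ("no_stable_phone", True, False),
    ("no_stable_phone", True, False),
    ("no_stable_phone", False, False),
    ("no_stable_phone", False, False),
]

sample_rates = false_positive_rate_by_group(sample_records)
groups_to_review = groups_exceeding_gap(sample_rates)
```

With this made-up data, patients without a stable phone number are wrongly flagged twice as often as the other group, so that group is the one surfaced for review. A real check would need enough visits per group to be meaningful, plus a person with the time and authority to act on the result.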

Barrier #2: The governance-capacity problem

Even if a tool seems promising, someone has to ask hard questions before (and after!) it goes live.

What evidence did the vendor provide? Was the tool validated anywhere remotely similar to this clinic? Who is checking whether it works differently across patient groups? Who reviews incidents after launch? Who notices when a silent update changes behavior? Who has the authority to pause or shut the tool off?

That is what governance actually means in practice. It is not just a policy document or a one-off trial period. It is staffing, technical access, continuous monitoring, contract leverage, incident response, and operational control.

And that is exactly where many safety-net organizations are thinly resourced. Their IT and informatics teams are often already stretched maintaining core systems. Analytics support may be limited or shared. Legal and procurement leverage may be weaker than in large health systems. Protected time for monitoring and post-implementation review may barely exist.

In other words, the places that may need the most careful local oversight may have the least spare capacity to perform it.

What makes this especially important is that these two barriers do not merely coexist. They reinforce each other.

Poor data increases the need for stronger oversight, because the local risks are harder to predict from vendor claims alone. Weak oversight makes poor data more dangerous, because the organization may not have the time, tools, or leverage to catch failures once the system is deployed.

That interaction illustrates a broader lesson for healthcare AI: governance cannot be treated as a luxury add-on for under-resourced settings. If anything, those settings need more disciplined governance, not less.

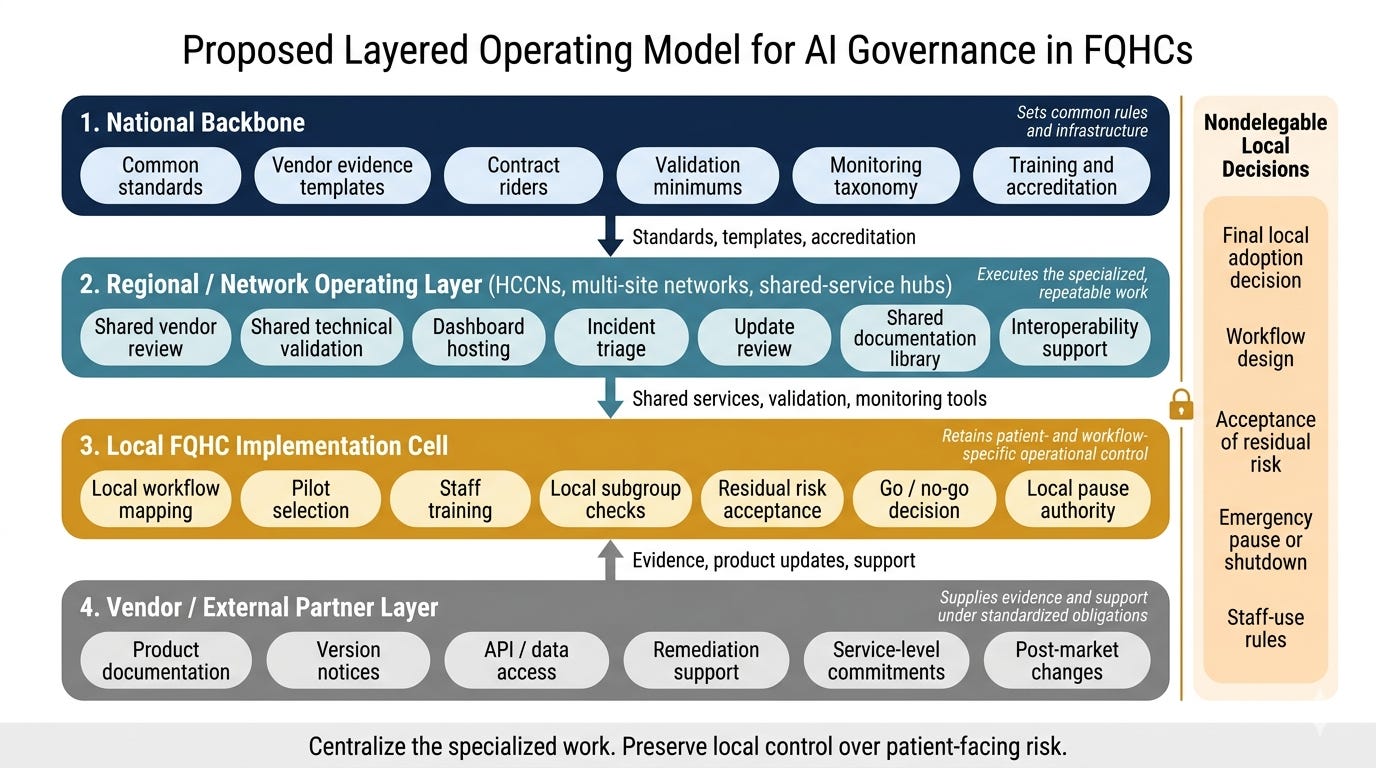

But asking every community clinic to build a full AI governance program from scratch is unrealistic.

That is why I think the most important strategic question is whether we are willing to build the kind of shared governance infrastructure that would let these clinics use AI safely.

That would mean shifting some of the specialized work away from each individual clinic and into shared support structures: common vendor review, shared validation support, common monitoring frameworks, standard documentation, and reusable governance tools.

But, critically, it would also mean keeping certain decisions local, because no outside entity can fully substitute for real knowledge of a clinic’s patients, workflows, and staffing requirements.

So the answer is not full centralization, and it is also not “make every clinic figure it out on its own.” It is something harder and more realistic: shared infrastructure and local control, working in tandem.

That may sound like a niche governance problem. It is not.

Safety-net clinics are where many of the strongest claims about healthcare AI meet the messiest realities of healthcare delivery.

If an AI system cannot be governed well in settings with fragmented data, limited staffing, and high social complexity, we should be more skeptical of broad claims that the tools are “ready” for general use.

And if we want AI to improve care rather than just reward the best-resourced organizations, then governance has to be designed for the hardest settings, not just the easiest ones.

The real test of healthcare AI is whether it can be implemented responsibly where the need is high, the operating margins are thin, and the human consequences of failure are hardest to absorb.

In summary: If we want AI to work in the places that need it most, we have to stop acting as if every clinic can build a full governance system by itself.

Thanks for reading until the end! I’m working on a white paper on this topic that I plan to publish. I hope it will help create an AI governance infrastructure that lifts up underserved populations rather than leaving them further behind. Stay tuned!
