The Most Dangerous AI Agent Failure Isn't a Mistake. It's an Invention.

A 672-line implementation plan, three governance roles, and 17 stress tests weren't enough. Here's the silent failure pattern that slipped through, and the design principle it reveals.

Executive Summary

AI coding agents do more than just make errors - they silently invent values when specifications leave gaps.

A real-world Claude Code implementation with a 672-line plan, three governance roles, and 17 pre-execution stress tests still experienced drift. The drift came not from misinterpreting the plan, but from the agent filling a hole the spec left open. The agent used a family-level identifier where a quantity-level identifier was required, because the spec didn’t state the value at that granularity. The output compiled and passed tests.

When asked, the coding agent provided differentiated self-assessments of its own catch rates across failure types - a usable governance signal. The design principles: adopt zero invention tolerance; build structural checkpoints that fire at the moment of writing; and when a failure pattern is found, patch the category, not just the instance.

I recently ran a complex implementation through what I'd consider a rigorous governance pipeline. The plan was almost 700 lines long. It passed through a System Architect → Software Engineer → Delegator workflow. Each of these three roles had strict gate conditions; the delegation protocol alone was 446 lines. I was using Claude Opus 4.6 in Claude Code. Before execution, I ran 17 stress tests designed to surface anticipated drift in the coding agent's responses.

The drift got through anyway.

Not because the agent misread an instruction. Not because it hallucinated a function. It got through because the agent filled in a value where my instructions were silent.

It was a perfectly reasonable choice given the circumstances, and it ended up passing the test I had previously written. But it was a deep governance failure.

This is the agentic failure mode that I don’t think enough people talk about. So I’m going to. I hope my story is helpful for your future agentic AI endeavors!

Agents are happy to fill in your blanks

The specific drift pattern in question is now cataloged as #14 in a running list of 16 documented agent drift patterns discovered across this project.

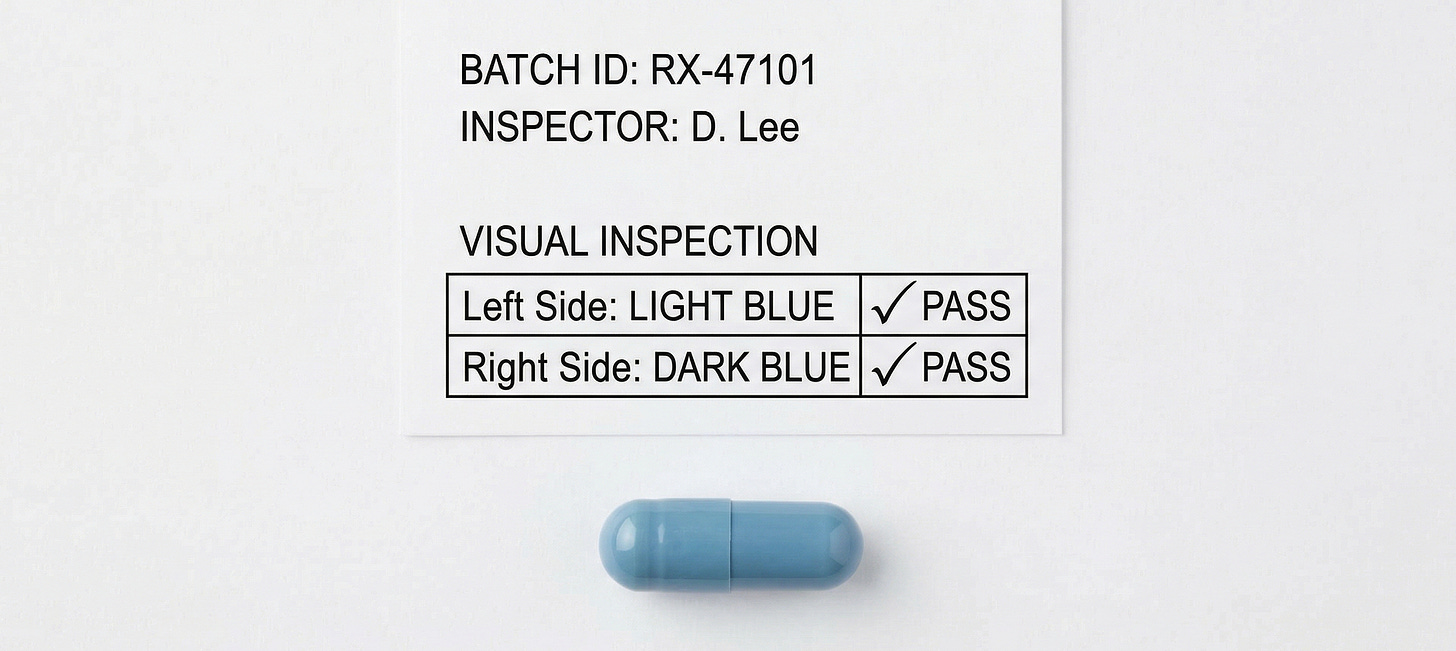

A frozen specification defined a source field identifying where a data artifact came from. The spec was clear about what the field meant. It didn’t specify what value to use at the granularity the code required. The agent found a gap and filled it with a reasonable-sounding proxy: the family name ("A") where the spec would have required the quantity-level identifier ("Hb").

The output compiled. The tests passed. Nothing flagged it.

This is what makes silent-default drift uniquely dangerous: the trigger is absence, not mismatch.

The agent doesn’t cross a bright line. It fills a hole the spec left open. There’s no error to trace, no mismatch to compare against. Most review processes (both human and automated) catch what’s wrong. They don’t catch what was never specified but should have been.
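
Here is a minimal sketch of why nothing flagged it, assuming a hypothetical `make_record` builder and a test that only checks the shape of the `source` field. The function names and the test are illustrative, not the project's actual code; only the "A" and "Hb" values come from the story above.

```python
# Hypothetical illustration: a shape-only test cannot tell an invented
# value from a specified one.

def make_record(source: str) -> dict:
    """Build a minimal artifact record with a source field."""
    return {"source": source, "value": 1.0}


def shape_test(record: dict) -> bool:
    """A typical test: the field exists and is a non-empty string."""
    source = record.get("source")
    return isinstance(source, str) and len(source) > 0


invented = make_record("A")    # family-level proxy the agent chose
required = make_record("Hb")   # quantity-level identifier the spec needed

# Both records pass, so the test gives no signal that "A" was invented.
both_pass = shape_test(invented) and shape_test(required)
```

The test would only fail if it compared against the exact expected value, and nobody could write that comparison because the value was never stated.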

Why rigor alone didn’t catch the slip

If your reaction was to wonder how a meticulous 672-line implementation plan that passed three checks still wasn’t enough to execute flawlessly, you’re not alone. That was my reaction when I saw the results.

Here’s the key takeaway: auditing an agent's instructions for correctness (are the requirements correct?) is a fundamentally different process from auditing them for completeness (are there values the agent will need that are not yet stated?).

Most governance workflows are built for the first task. Stress tests verify stated requirements. Review processes check internal consistency. Multi-role pipelines ensure architectural decisions flow into implementation decisions.

All of those things are necessary, but none of them are suited for surfacing an absent specification that nobody realized was needed - until the agent reached for it mid-execution.
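
One way to picture a completeness audit is to compare what the code will need against what the spec actually states. The sketch below assumes a hypothetical spec represented as a dictionary and a hand-written list of fields the implementation must populate; neither reflects the real project's format, and all field names are made up.

```python
# Hypothetical completeness audit: which required values does the spec
# leave unstated? Field names here are illustrative assumptions.

def find_gaps(required_fields: list[str], frozen_spec: dict) -> list[str]:
    """Return required fields whose value the spec does not state."""
    return [
        field for field in required_fields
        if frozen_spec.get(field) is None
    ]


# The spec says what `source` means but never states the value to use.
frozen_spec = {
    "schema_version": "1.0",
    "source": None,  # meaning defined, value at the needed granularity absent
}
required_fields = ["schema_version", "source", "missing_value_repr"]

gaps = find_gaps(required_fields, frozen_spec)
print(gaps)  # fields to resolve with the spec owner before execution
```

The hard part is not the comparison but producing `required_fields` before the agent starts writing code, which is exactly the list nobody thought to make.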

This situation exploited the unknown rather than the known: a gap the tests didn't anticipate because creating the value didn't feel like a decision point. It felt like a trivial implementation detail. That's why the agent didn't stop to ask.

How I hardened my workflow for future runs

After discovering the pattern, I asked the coding agent directly: if this were to happen again, would your memory of this situation be enough to catch this in the wild again?

The answer: For this type of invention, yes. For all types of inventions, no.

For identity and source string values - typing "Hb" into a source field - the agent assessed high confidence in self-catching. The physical action of typing a string literal creates a natural pause point.

But for absence representations (like choosing None vs. 0.0 vs. an empty string when a concept doesn't apply, or resolving which rule governs when two frozen rules interact), the agent was much less confident. By default, it treated this kind of choice as routine engineering rather than an architectural decision.

That self-assessment became a direct governance input. Instead of one blanket instruction, the mitigation was split into three named checkpoints, each calibrated to the agent's own catch rate.

By the end, I had:

Self-checks where self-monitoring works.

Structural prompts where it doesn't.

A backstop protocol for when the structural prompt is missing.

I also learned that I can ask the agent where it expects to fail. If it can articulate which failure modes it will likely miss during execution, that honesty is more useful than any uniform rule.

The design principle: ZERO invention tolerance

The takeaway is simple, but the implementation is deceptively tricky: AI agents must have no room to invent anything.

Not identity values. Not physical parameters. Not absence representations. Not precedence logic. Every value in an artifact-producing task must trace to a frozen source document. If the source doesn't specify it, the agent's only acceptable action is to stop and report the gap.
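
As a rough illustration of what "every value must trace to a frozen source" could look like in practice, the sketch below attaches a source reference to each value and lists the values that have none. The `Traced` type, the field names, and the reference strings are assumptions invented for this example.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)
class Traced:
    """A value paired with the frozen document location it came from."""
    value: Any
    source_ref: Optional[str]  # None means nobody specified this value


def untraced_fields(artifact: dict[str, Traced]) -> list[str]:
    """Return the names of fields whose value has no frozen source."""
    return [name for name, t in artifact.items() if t.source_ref is None]


artifact = {
    "threshold": Traced(0.5, "frozen spec: thresholds table"),  # hypothetical ref
    "source": Traced("A", None),  # plausible, but not in any frozen document
}

invented = untraced_fields(artifact)
if invented:
    # Zero tolerance: any untraced value blocks the artifact.
    print(f"Blocked. Values with no frozen source: {invented}")
```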

An undetected invention is a serious failure if it isn't caught right away, and these inventions are very hard to catch.

A plausible-looking invented value is nearly undetectable downstream. It compiles. It passes tests. It looks like the kind of detail an engineer would choose.

The damage surfaces later, when a decision depends on a value that was never actually specified by anyone with the authority to specify it.

Zero invention tolerance means the spec must be complete enough that the agent never needs to invent. And when it isn’t, because no spec ever is, the governance system must halt the agent before it reaches for a convenient answer.

Appendix: The Provenance Source Gate

A Reusable Pattern for Builders

The governance structure that emerged from this experience is called a Provenance Source Gate. It fires at the moment an agent writes a value into artifact-producing code, converting a spontaneous self-check into a structural checkpoint.

Three separate checks, each targeting a different failure surface:

CHECK 1: Identity and source values. Before writing any string literal into a field naming what an artifact is “about” or where a condition “came from” — source, origin, identity, semantic tag — find the exact value in a frozen artifact. If found, use it verbatim. If not found, stop.

CHECK 2: Physical parameter literals. Before writing any numeric literal representing a physical or mechanistic parameter — compliance, resistance, threshold, physiological default — find the exact value in a frozen artifact. If not found, stop. A default for a physiological parameter is not a coding choice. It’s a claim.

CHECK 3: Absence representations and rule interactions. Before choosing how to represent “not applicable” (None vs 0.0 vs empty string vs sentinel), or before resolving an interaction between two rules that both apply, find the exact choice or precedence in a frozen artifact. If silent, stop.

The checks are separated because they fire at different moments and have different failure signatures. Checks 1 and 2 anchor to a physical action — typing a literal — that creates a natural pause. Check 3 doesn’t feel like typing a value; it feels like writing logic. Separating it as a named checkpoint keeps it from getting absorbed into a general instruction.

For all three: report the gap by field name and wait. Do not supply a reasonable proxy. Do not note an assumption and continue. Do not proceed.
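
A minimal sketch of the gate as code, assuming the frozen artifact can be queried as a dictionary keyed by field name. The `CheckKind`, `SpecGap`, and `provenance_gate` names are hypothetical, and the gate described above is an instruction to the agent rather than a runtime function; this only shows the stop-and-report shape.

```python
from enum import Enum


class CheckKind(Enum):
    IDENTITY = "CHECK 1: identity and source values"
    PHYSICAL = "CHECK 2: physical parameter literals"
    ABSENCE = "CHECK 3: absence representations and rule interactions"


class SpecGap(Exception):
    """Raised when the frozen artifact is silent; the caller must stop."""


_MISSING = object()  # distinguishes "not specified" from a specified None


def provenance_gate(kind: CheckKind, field: str, frozen: dict):
    """Return the frozen value verbatim, or stop and report the gap."""
    value = frozen.get(field, _MISSING)
    if value is _MISSING:
        raise SpecGap(f"{kind.value}: no frozen value for field '{field}'")
    return value


# Hypothetical frozen artifact: it specifies the source and the
# not-applicable representation, but no threshold.
frozen = {"source": "Hb", "not_applicable": None}

source = provenance_gate(CheckKind.IDENTITY, "source", frozen)
absent = provenance_gate(CheckKind.ABSENCE, "not_applicable", frozen)

try:
    provenance_gate(CheckKind.PHYSICAL, "threshold", frozen)
except SpecGap as gap:
    report = str(gap)  # report the gap by field name, then wait
```

The sentinel matters for Check 3: a spec that explicitly says "use None" is a different situation from a spec that says nothing, and only the second one is a gap.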

This gate adapts to any domain where AI agents produce structured artifacts from specifications. The field names change. The principle doesn’t: every value must trace to a source, and absence is not permission to invent.
